Yelp Web Scraping: The Practical 2026 Guide

You’re probably in one of two situations right now. Either you need a clean competitor list from Yelp and you’re tired of copying names, ratings, hours, and phone numbers by hand, or you already built a rough scraper and Yelp started pushing back with empty responses, inconsistent HTML, or outright blocks.

That’s where most first Yelp projects go sideways. The hard part isn’t getting one page into a CSV. The hard part is building a workflow you can trust, then turning that raw output into something a business owner, marketer, or operator can actually use. For Yelp web scraping, the primary deliverable isn’t a folder of exports. It’s a repeatable pipeline that collects public business data, organizes it, and feeds a decision-making system your team will regularly open.

The Business Case for Scraping Yelp

A leasing decision often starts with a simple question. Which businesses already own the neighborhood? A coffee shop owner can answer that by hand for one block. The process falls apart once the search expands to several ZIP codes and the owner needs more than names and star ratings.

Yelp matters because it combines directory data with customer language. A listing shows who operates in an area, how they position themselves, when they are open, and what customers mention repeatedly in reviews. That mix is useful for market research, competitor tracking, local lead generation, and service gap analysis.

What businesses actually pull from Yelp

Early scraping projects usually start with the obvious fields. Business name, category, rating, address, phone number. Those fields help, but they rarely justify the maintenance cost of a scraper on their own.

The stronger business cases come from combining listing data with review text and operational details, then tying that output to a clear decision:

- Competitive mapping. Identify which businesses appear repeatedly for a category, neighborhood, or search phrase.

- Lead generation. Find local businesses that match a target profile but still show weak branding, thin review volume, or inconsistent profile coverage.

- Hours and service positioning. Compare who opens early, stays open late, offers delivery, or covers a niche that nearby competitors ignore.

- Sentiment themes. Group review language into recurring praise and complaints, then use that for positioning, outreach, or product changes.

- Brand monitoring. Track how your own locations are described against nearby alternatives over time.

That is the difference between collecting data and using it.

A freelancer can turn this into a prospect list. A multi-location operator can compare neighborhood saturation before opening a new site. A marketing team can spot common complaints in a category and build campaigns around the gap.

Practical rule: If the data will not change a decision, do not scrape it.

I give junior engineers the same advice on first projects. Start with the business question, then define the minimum schema needed to answer it. Pulling every visible field usually creates cleanup work without improving the final analysis.

The difference between raw extraction and useful intelligence

Many tutorials stop too early at the CSV export. That file may satisfy a scraping demo, but it does not help much once a sales lead needs follow-up, an operator needs weekly review monitoring, or a founder wants one place to review competitors and assign next steps.

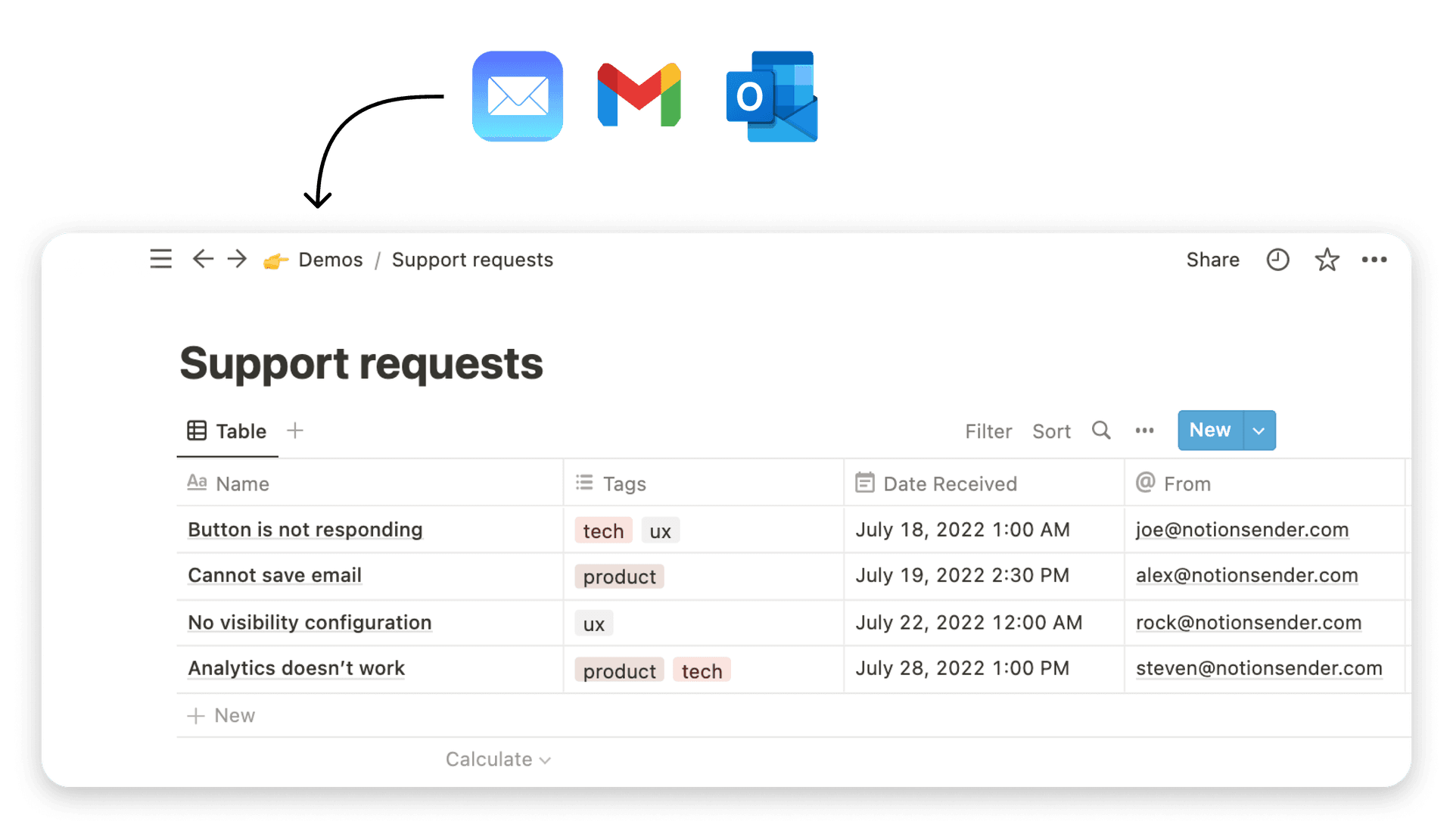

The useful version of a Yelp pipeline ends in a system the team already checks. In practice, that often means a Notion database with filtered views, status fields, owner assignments, and simple summaries by category or area. If the workflow also triggers outreach or internal follow-up, this guide on creating and sending email from Notion shows how that operational layer can sit next to the scraped data.

That end-to-end workflow is the primary business case. Yelp scraping is not just about extracting listings. It is about turning public business data into something a team can review, act on, and maintain without rebuilding the process every week.

Yelp API vs Direct Scraping: Which Path to Take

Before you touch selectors or proxies, decide whether you need scraping. A lot of projects don’t. Yelp already offers the Fusion API, and if your requirements fit inside its limits, using it will save you time, maintenance, and legal exposure.

The problem is that many business intelligence projects need more depth than the API provides. Yelp’s Fusion API is capped at 5,000 calls per day on the free tier and returns a maximum of 3 reviews per business, which makes it too thin for broad review analysis according to Octoparse’s discussion of Yelp scraping legality and API limits.

Use the API when your needs are narrow and stable

If you need a compliant directory of businesses with stable metadata, the API is usually the right first move. It works well for things like:

| Need | API fit |
|---|---|
| Basic business listings | Strong |
| Business IDs and names | Strong |
| Ratings and standard fields | Strong |
| Deep review analysis | Weak |
| Large-scale sentiment work | Weak |

The API path is best when your output looks like a CRM seed list, location lookup, or a light competitor database. You get cleaner JSON, fewer broken workflows, and less engineering overhead.

There’s also a maintenance advantage. When Yelp changes front-end markup, API clients don’t care. HTML scrapers do.
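For the API path, a minimal sketch of a business search request might look like this. The endpoint and Bearer-token header follow Yelp's public Fusion documentation as I understand it; the helper name `build_search_request` and the `YELP_API_KEY` variable are illustrative assumptions, not part of any official client.

```python
FUSION_SEARCH_URL = "https://api.yelp.com/v3/businesses/search"


def build_search_request(api_key, term, location, limit=20):
    """Return the URL, headers, and params for one Fusion search call."""
    return {
        "url": FUSION_SEARCH_URL,
        "headers": {"Authorization": f"Bearer {api_key}"},
        "params": {"term": term, "location": location, "limit": limit},
    }


# Hypothetical usage with the requests library (not executed here):
#   import os, requests
#   req = build_search_request(os.environ["YELP_API_KEY"], "plumbers", "Austin, TX")
#   resp = requests.get(req["url"], headers=req["headers"], params=req["params"], timeout=10)
#   businesses = resp.json().get("businesses", [])
```

Keeping request construction separate from the network call makes it easy to log or unit test exactly what was sent.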

Use direct scraping when review depth matters

If your project depends on review text, review volume, or page-level business details that aren’t fully available through the API, direct scraping becomes the practical path. This is common in projects like:

- Sentiment analysis pipelines that need more than the tiny review sample the API returns

- Reputation benchmarking across many businesses in one category

- Service intelligence where page content contains details not exposed cleanly elsewhere

- Local market studies that depend on how businesses present themselves to users

This is where Yelp web scraping earns its complexity. You’re trading official access for flexibility.

The API is for reliability. Scraping is for depth.

That’s the decision in one line.

A decision framework that actually helps

Use this checklist before you choose:

- Start with the business question. If the question is “Which plumbers operate in this city?” the API may be enough. If the question is “Why do top-rated plumbers get better reviews?” you’ll likely need scraped review text.

- Estimate how often data must refresh. A one-time research pull is different from a weekly dashboard. Repeated scraping requires stronger operational discipline.

- Check your tolerance for maintenance. Scrapers break. APIs fail too, but they usually fail in more predictable ways.

- Decide what happens after extraction. If the data feeds a dashboard, alerting workflow, or outreach system, consistency matters more than scraping every possible field.

My default recommendation

For most first projects, use a hybrid approach. Seed the pipeline with API data where possible, then scrape only the page-level details you can’t get any other way. That reduces fragility and keeps your scraper focused.

This also improves how you think about architecture. Instead of “scrape Yelp,” the job becomes:

- fetch candidate businesses

- enrich selected listings

- normalize fields

- store output

- push insights into the team workflow

That’s a much better pattern than crawling blindly and hoping the data turns out useful.
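One way to keep those five stages honest is to wire them as plain functions passed into a small runner. This is only a sketch: `run_pipeline` and the stage parameter names are hypothetical, and each stage would be replaced by your own API, scraping, and delivery code.

```python
from typing import Callable, Iterable


def run_pipeline(
    fetch_candidates: Callable[[], Iterable[dict]],
    enrich: Callable[[dict], dict],
    normalize: Callable[[dict], dict],
    store: Callable[[list], None],
    push: Callable[[list], None],
) -> list:
    """Run the five stages in order; each stage is a plain function."""
    records = []
    for candidate in fetch_candidates():
        records.append(normalize(enrich(candidate)))
    store(records)  # for example, write CSV or JSON
    push(records)   # for example, create or update pages in the team workspace
    return records
```

Because every stage is injected, you can swap the API seed for a scraped seed, or test the whole flow with stub functions, without touching the runner.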

Your Python Toolkit for Scraping Yelp Data

Most Yelp projects don’t need a huge stack. They need a clean one. Start simple, keep dependencies obvious, and don’t reach for a browser unless the page forces you to.

The minimal project setup

A practical starter structure looks like this:

    project/
        scraper/
        parsers/
        outputs/
        notion/
        config/
        main.py
        requirements.txt

Then create a virtual environment and install the basics:

    requests
    beautifulsoup4
    lxml
    pandas
    python-dotenv

That stack handles a lot of useful work. It’s fast, readable, and easy to debug.

Requests and BeautifulSoup for the first pass

Use requests when you can fetch useful HTML or JSON without a real browser. Use BeautifulSoup to parse returned markup into fields your script can work with. This pair is ideal for:

- search result pages that expose structured data cleanly

- detail pages where the content is present in server responses

- prototypes where you want fast iteration

Don’t overcomplicate this stage. If the page returns what you need, a browser is unnecessary overhead.

A junior mistake I see often is choosing Selenium first because it feels closer to how a human browses. That makes debugging slower and scaling harder. Start with plain HTTP requests. Promote to a browser only when you confirm the page depends on JavaScript execution or interaction patterns.
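A plain HTTP first pass can be as small as the sketch below. It assumes `session` behaves like `requests.Session`; the function name `fetch_html` and the 500-character floor are illustrative choices, not values taken from Yelp.

```python
def fetch_html(session, url, timeout=10, min_length=500):
    """Fetch a page and return its HTML, or None if the response looks unusable.

    `session` is expected to behave like requests.Session.
    """
    response = session.get(url, timeout=timeout)
    if response.status_code != 200:
        return None
    html = response.text
    # A 200 with a near-empty body is often a soft block, not real content.
    if len(html) < min_length:
        return None
    return html


# Hypothetical usage with the real library (not executed here):
#   import requests
#   session = requests.Session()
#   html = fetch_html(session, "https://www.yelp.com/biz/example")
```

Returning `None` instead of raising keeps the caller's decision simple: retry, escalate to a browser, or log and move on.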

When to bring in Selenium or Playwright

Use browser automation for the parts of Yelp that don’t render cleanly through direct requests, or when you need to behave more like a real user session. Selenium works. Playwright usually feels more modern and easier to manage for scraping projects with dynamic rendering.

Browser tools help when you need to:

- execute JavaScript before content appears

- click “load more” patterns

- preserve session state

- inspect network activity while replicating human interaction
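If a page type does need rendering, a minimal Playwright sketch could look like the following. It uses Playwright's synchronous Python API and assumes the package and its browsers are installed; `render_html` and the default `h1` wait selector are hypothetical choices you would adapt to the page.

```python
def render_html(url, wait_selector="h1", timeout_ms=30000):
    """Load a page in headless Chromium and return the rendered HTML."""
    # Imported lazily so the rest of the pipeline runs without Playwright installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        try:
            page = browser.new_page()
            page.goto(url, wait_until="domcontentloaded", timeout=timeout_ms)
            # Wait for an element that signals the needed content has appeared.
            page.wait_for_selector(wait_selector, timeout=timeout_ms)
            return page.content()
        finally:
            browser.close()
```

The function returns the same kind of HTML string a plain request would, so the parser does not need to know which fetch path produced it.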

Here’s a solid walkthrough to watch before you start wiring browser automation into production:

<iframe width="100%" style="aspect-ratio: 16 / 9;" src="https://www.youtube.com/embed/mn6aj3JitVo" frameborder="0" allow="autoplay; encrypted-media" allowfullscreen></iframe>

A practical stack by project stage

| Stage | Recommended tools |
|---|---|
| Prototype | requests, BeautifulSoup |
| Dynamic pages | Playwright or Selenium |
| Cleaning and shaping | pandas |
| Secrets and config | python-dotenv |
| Output to apps | requests or SDK clients |

If your team doesn’t have in-house scraping experience, it can help to bring in experienced Python developers who’ve already built and maintained web data pipelines. That matters more for reliability than for the initial prototype.

A scraper is easy to demo. It’s harder to keep alive after the target site changes.

Preparing for downstream delivery

Don’t treat extraction and delivery as separate projects. Design the schema early. Decide what each row represents, what makes it unique, and what fields are optional.

If you know you’ll later send records into Notion, map your output to that destination before you write too much parser logic. The Notion API documentation is useful here because it forces you to think in terms of database properties, page payloads, and field types rather than loose JSON blobs.

A clean starter record for Yelp usually includes:

- source URL

- business name

- category or search term

- location

- rating text

- review snippet or review body

- scrape timestamp

- parser version

That last field matters. When Yelp changes markup, parser versioning helps you track when records started drifting.
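The starter record above could be pinned down as a dataclass so every row carries the same fields. This is a sketch: the class name `YelpRecord`, the field names, and the `PARSER_VERSION` label are illustrative assumptions.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional

PARSER_VERSION = "2026.01"  # hypothetical label; bump it when selectors change


@dataclass
class YelpRecord:
    source_url: str
    business_name: Optional[str] = None
    search_term: Optional[str] = None
    location: Optional[str] = None
    rating_text: Optional[str] = None
    review_text: Optional[str] = None
    # UTC timestamp recorded when the record object is created.
    scraped_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    parser_version: str = PARSER_VERSION


row = asdict(
    YelpRecord(source_url="https://www.yelp.com/biz/example", business_name="Example Cafe")
)
```

Only `source_url` is required here; everything else defaults to `None`, which matches the idea that optional fields should be explicit rather than silently missing.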

Navigating Yelp's Anti-Scraping Defenses

Start with a realistic expectation. Yelp is one of those targets where a scraper can look fine in a demo and still fail in production after a few hundred requests. The issue is rarely the first parser. It is staying collectable long enough to produce data you trust in a dashboard.

That matters even more if the end goal is business intelligence in Notion rather than a one-off CSV. Bad fetches do not just lower coverage. They create holes in the records your team will later sort, tag, and act on through the Notion API or a delivery layer like NotionSender.

Why simple scripts fail early

A beginner usually starts with requests.get(), a copied user agent, and a fixed sleep interval. That approach creates a traffic pattern that is easy to classify. Yelp can check more than headers. Session continuity, request pacing, client fingerprint consistency, and whether the page behavior matches a normal browser session all matter.

JavaScript is part of the problem too. Some pages expose enough HTML to make you think extraction is working, while important fields load later or change by page type. If you scrape only the first response and never verify what rendered, you can miss records without noticing.

Use a simple rule. If the scraper runs cleanly but sampled output looks thin, suspiciously repetitive, or partially empty, assume collection is failing before you blame the parser.

What a stronger request strategy looks like

Good defense handling comes from stacking several controls together. No single trick carries this.

Render only when required

Browser automation is expensive, so do not send every request through Playwright by default. First inspect the page in DevTools. Check XHR calls, inspect embedded JSON, and compare raw HTML against the rendered DOM. If the required fields are already present in the server response, stay with plain requests. If key content appears only after scripts run, render that page type and nowhere else.

That keeps cost and block risk lower.

The broader patterns are covered well in these modern web scraping best practices, but the short version is simple. Match the collection method to the page behavior.
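A quick way to make the render decision repeatable is to check a saved raw response for the markers your parser depends on. The sketch below assumes simple substring markers; `missing_markers` and the sample HTML are hypothetical.

```python
def missing_markers(raw_html, required_markers):
    """Return the markers that are absent from the raw server response.

    An empty list suggests plain HTTP requests are enough for this page type.
    """
    return [marker for marker in required_markers if marker not in raw_html]


raw = '<html><h1>Example Cafe</h1><div aria-label="4.5 star rating"></div></html>'
missing = missing_markers(raw, ["<h1", "star rating", "Phone number"])
# "Phone number" is absent here, so this page type is a candidate for rendering.
```

Run it per page type on a handful of saved responses rather than on every request; the point is to decide once where a browser is justified.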

Keep identity coherent

Proxy rotation helps, but rotating IPs alone is not enough. A believable session has internal consistency. Headers should match the browser family you claim to be using. Viewport, language, timezone, cookie handling, and navigation flow should fit together.

Useful controls include:

- session-based proxy assignment instead of changing IP on every request

- realistic header sets, not a random grab bag

- stable cookies across related page visits

- viewport and locale settings that fit the proxy geography

- limited concurrency per session

Silent misalignment between the network layer and the browser fingerprint is the most dangerous bug here.

I have seen teams rotate residential IPs correctly and still get poor results because the browser fingerprint stayed rigid across thousands of sessions. The scraper looked distributed at the network layer and obviously automated everywhere else.
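Session-based proxy assignment can be sketched with a stable hash, so every request in one browsing session maps to the same exit IP. The function name and the proxy URLs below are placeholders; real pools usually come from a provider's configuration.

```python
import hashlib


def proxy_for_session(session_id, proxy_pool):
    """Map a session ID to one proxy so related requests share an IP."""
    digest = hashlib.sha256(session_id.encode("utf-8")).hexdigest()
    return proxy_pool[int(digest, 16) % len(proxy_pool)]


# Placeholder proxy URLs; substitute your provider's endpoints.
POOL = ["http://proxy-a.example:8000", "http://proxy-b.example:8000"]
first = proxy_for_session("austin-plumbers-01", POOL)
second = proxy_for_session("austin-plumbers-01", POOL)
```

The same idea extends to headers, locale, and viewport: derive them from the session ID once, then keep them fixed for the life of that session.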

Vary pacing and path

Fixed delays create a signature. So does hitting only deep business URLs with no search or category navigation before them.

A better pattern is to model short browsing flows:

- open a search results page

- wait for a variable interval

- click or request a business page

- allow page assets to settle

- extract fields

- pause before the next transition

The goal is not to imitate a human perfectly. The goal is to stop advertising that every action came from a scheduler firing on exact intervals.
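Variable pacing takes only a few lines. The sketch below draws each delay uniformly around a base value; the 4-second base and 50% spread are arbitrary assumptions you should tune conservatively.

```python
import random
import time


def jittered_delay(base_seconds=4.0, spread=0.5, rng=random):
    """Return a delay drawn uniformly from base * (1 - spread) to base * (1 + spread)."""
    return rng.uniform(base_seconds * (1 - spread), base_seconds * (1 + spread))


def pause(base_seconds=4.0, spread=0.5):
    """Sleep for a jittered interval between page transitions."""
    time.sleep(jittered_delay(base_seconds, spread))
```

Passing `rng` explicitly lets you seed a `random.Random` instance in tests while production code uses the module-level generator.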

Monitor collection quality while the job runs

Without monitoring, scraping turns into guesswork. Track signals that tell you whether the fetch layer is degrading before the parser starts producing garbage.

| Signal | Likely meaning |
|---|---|
| Empty HTML with 200 responses | soft block or incomplete rendering |
| Sudden redirect spikes | unstable session or challenge flow |
| CAPTCHA pages in sample logs | fingerprint or proxy issue |
| Selector miss rate jumps | markup changed or content shape shifted |
| Duplicate businesses across pages | pagination, retry, or state bug |

Alert on those conditions early. Do not wait until the Notion database fills with partial records and conflicting entries.
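Several of those signals can be counted per run with a small classifier. This is a sketch under assumptions: the label names, the `CAPTCHA_MARKERS` strings, and the 20% alert threshold are all illustrative and should be checked against your own saved responses.

```python
from collections import Counter

# Assumed marker strings; confirm them against saved block pages.
CAPTCHA_MARKERS = ("captcha", "unusual activity")


def classify_response(status_code, html, expected_selector_hit):
    """Label one fetch so degradation shows up in counts, not in the dashboard."""
    body = (html or "").strip()
    if status_code in (301, 302, 303, 307, 308):
        return "redirect"
    if status_code != 200:
        return "http_error"
    if not body:
        return "empty_200"
    if any(marker in body.lower() for marker in CAPTCHA_MARKERS):
        return "captcha"
    if not expected_selector_hit:
        return "selector_miss"
    return "ok"


def should_alert(counts, max_bad_ratio=0.2):
    """Alert when the share of non-ok fetches exceeds a threshold."""
    total = sum(counts.values())
    return total > 0 and (total - counts["ok"]) / total > max_bad_ratio


counts = Counter(
    classify_response(status, html, hit)
    for status, html, hit in [
        (200, "<h1>Example</h1>", True),
        (200, "", False),
        (200, "<p>Please complete the CAPTCHA</p>", False),
    ]
)
```

Checking `should_alert(counts)` every few hundred requests is usually enough to stop a degraded run before partial records reach delivery.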

Scale in a way that preserves trust

For a first Yelp project, smaller and cleaner beats wider and noisier. Crawl one city, one category, or one review slice. Save raw responses. Sample records by hand. Then increase volume after you know which failures are fetch problems, which are parser problems, and which are normal gaps in the source.

That discipline pays off later. When stakeholders open the Notion dashboard, they should be looking at review trends, weak locations, and competitor patterns they can act on. They should not be debating whether half the missing ratings came from blocked sessions.

A few habits consistently save time:

- separate fetching, parsing, and delivery into different steps

- log request metadata with each saved response

- version session settings and parser logic

- cap retries until you understand why requests fail

- validate output against live pages on a fixed sample

The junior goal is to collect pages. The production goal is to deliver records that stay reliable all the way through analysis and into a Notion workflow people can use.

Turning Raw HTML into Structured Insights

Once you’ve fetched pages reliably, the next job is turning messy markup into records you can analyze. Many beginners lose discipline at this stage. They scrape text directly into spreadsheets, skip validation, and only notice problems after the dashboard looks wrong.

The better pattern is simple. Parse into a stable Python structure first. Validate second. Export last.

Build a parser around fields, not page appearance

Start by opening a saved Yelp response and identifying the exact fields that matter for the business question. Don’t parse everything visible on the screen. Parse a schema.

A useful review-oriented schema might include:

- business_name

- business_url

- address

- phone

- rating_text

- review_count_text

- review_author

- review_text

- scrape_date

That keeps the parser focused. If you later add sentiment or tags, do that in a downstream transform step.

A practical BeautifulSoup pattern

Here’s a simplified parser shape using BeautifulSoup:

    import re

    from bs4 import BeautifulSoup


    def parse_business_page(html, url):
        """Parse one saved Yelp business page into a flat record."""
        soup = BeautifulSoup(html, "lxml")

        # Every field starts as None so a missing element never shifts values.
        record = {
            "source_url": url,
            "business_name": None,
            "rating_text": None,
            "review_count_text": None,
            "phone": None,
            "address": None,
        }

        h1 = soup.find("h1")
        if h1:
            record["business_name"] = h1.get_text(strip=True)

        rating_tag = soup.find("div", attrs={"aria-label": re.compile("star rating")})
        if rating_tag:
            record["rating_text"] = rating_tag.get("aria-label")

        review_count = soup.find("span", string=re.compile("reviews"))
        if review_count:
            record["review_count_text"] = review_count.get_text(strip=True)

        # find_next_sibling() skips whitespace text nodes and returns a tag or None.
        phone_label = soup.find("p", string="Phone number")
        phone_value = phone_label.find_next_sibling() if phone_label else None
        if phone_value:
            record["phone"] = phone_value.get_text(strip=True)

        directions_link = soup.find("a", string="Get Directions")
        if directions_link and directions_link.parent:
            address_tag = directions_link.parent.find_next_sibling()
            if address_tag:
                record["address"] = address_tag.get_text(strip=True)

        return record

This style works because it treats every field as optional. That matters. Yelp pages vary, and a parser that assumes every element exists will crash or produce shifted garbage.

Parse defensively. Missing data is normal. Silent misalignment is the real bug.
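Text fields like `rating_text` and `review_count_text` are easier to sort and chart once they are numbers. The helpers below are a sketch that assumes labels shaped like "4.5 star rating" and "1,204 reviews"; both function names are hypothetical, and the real label formats should be confirmed on saved pages.

```python
import re


def parse_rating(rating_text):
    """Extract the first decimal number from a label such as '4.5 star rating'."""
    if not rating_text:
        return None
    match = re.search(r"\d+(?:\.\d+)?", rating_text)
    return float(match.group()) if match else None


def parse_review_count(review_count_text):
    """Extract an integer from text such as '1,204 reviews'."""
    if not review_count_text:
        return None
    match = re.search(r"\d[\d,]*", review_count_text)
    return int(match.group().replace(",", "")) if match else None
```

Keeping the raw text alongside the parsed number makes it possible to re-derive values later if the label format changes.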

Store clean intermediate data

After parsing, save records into a list of dictionaries. That structure is easy to inspect, filter, and export.

    # fetched_pages: saved responses from the fetch step, each with "html" and "url" keys
    records = []
    for page in fetched_pages:
        parsed = parse_business_page(page["html"], page["url"])
        records.append(parsed)

Then export with pandas:

    import pandas as pd

    df = pd.DataFrame(records)
    df.to_csv("outputs/yelp_businesses.csv", index=False)
    df.to_json("outputs/yelp_businesses.json", orient="records", force_ascii=False)

CSV is good for quick review and stakeholder handoff. JSON is better if another app, script, or automation step needs to consume the records.

Add a validation layer before export

Before you save final output, run a few checks:

- Required field checks for source URL and business name

- Length checks so obviously broken text gets flagged

- Deduping rules based on a stable key

- Sampling review where you inspect real outputs manually
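The first three checks can be combined into one pass before export. In this sketch the function name, the reason strings, and the length limits (200 characters for a name, 5,000 for review text) are assumptions, and `source_url` stands in as the stable dedupe key.

```python
def validate_records(records, max_text_length=5000):
    """Split records into clean and flagged lists, dropping duplicate URLs."""
    clean, flagged, seen_urls = [], [], set()
    for record in records:
        url = record.get("source_url")
        name = record.get("business_name")
        if not url or not name:
            flagged.append((record, "missing required field"))
        elif len(name) > 200 or len(record.get("review_text") or "") > max_text_length:
            flagged.append((record, "suspicious text length"))
        elif url in seen_urls:
            continue  # duplicate of a record already kept
        else:
            seen_urls.add(url)
            clean.append(record)
    return clean, flagged
```

Flagged records are returned with a reason rather than discarded, which gives the manual sampling step something concrete to inspect.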

If you want a broader framework for parser hygiene and production readiness, ScreenshotEngine’s guide to modern web scraping best practices is worth reading.

A parser should produce records you can trust, not just records you can count.

From Scraped Data to an Actionable Notion Dashboard

A CSV proves the scraper works. It doesn’t help much on Tuesday morning when someone wants to know which competitor has the strongest review trend in a target neighborhood.

The operational move is to load that data into a Notion database where non-technical teammates can sort, filter, comment, and act on it. For small teams, that’s often enough. You don’t need a full BI stack to get value from scraped Yelp data.

Option one: using the Notion API

The direct engineering route is to push your cleaned records into a Notion database through the API. Each business becomes a page. Each parsed field maps to a property.

A practical schema in Notion might include:

| Notion property | Yelp field |
|---|---|
| Name | business_name |
| URL | source_url |
| Location | address |
| Rating | rating_text |
| Review count | review_count_text |
| Notes | review summary or sentiment |
| Last scraped | scrape date |

In Python, the workflow is straightforward:

- clean and normalize records

- map fields to Notion property types

- create or update pages

- log failures for retries

This works best when you want full control over deduping and updates. It also lets you enrich records before delivery, such as adding tags like “late hours,” “strong dessert reviews,” or “needs manual verification.”
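The field mapping step might be sketched as below. The property names come from the table above, but the property types (title, url, rich_text, date) are assumptions that must match how your database is configured, and the helper names are hypothetical. The payload shape follows Notion's create-page endpoint as I understand it.

```python
NOTION_PAGES_URL = "https://api.notion.com/v1/pages"


def notion_headers(token, version="2022-06-28"):
    """Headers for the Notion API; the version string pins the API behavior."""
    return {
        "Authorization": f"Bearer {token}",
        "Notion-Version": version,
        "Content-Type": "application/json",
    }


def build_page_payload(database_id, record):
    """Map one cleaned record to a Notion page payload."""
    def text(value):
        return [{"text": {"content": value or ""}}]

    return {
        "parent": {"database_id": database_id},
        "properties": {
            "Name": {"title": text(record.get("business_name"))},
            "URL": {"url": record.get("source_url")},
            "Location": {"rich_text": text(record.get("address"))},
            "Rating": {"rich_text": text(record.get("rating_text"))},
            "Review count": {"rich_text": text(record.get("review_count_text"))},
            "Last scraped": {"date": {"start": record["scrape_date"]}},
        },
    }


# Hypothetical delivery call (not executed here):
#   import requests
#   requests.post(NOTION_PAGES_URL, headers=notion_headers(token),
#                 json=build_page_payload(database_id, record), timeout=10)
```

Building the payload separately from the HTTP call makes failed deliveries easy to log and retry with the exact body that was rejected.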

Option two: using an email-based workflow

Sometimes the cleaner solution isn’t another API client. It’s email.

If your scraper already produces daily summaries, filtered lists, or review digests, sending those results into Notion through an email-to-database workflow can be easier for a small team. A scheduled script can package the output into a readable report, then deliver it into a connected Notion workspace. That’s especially handy for recurring competitor snapshots and lightweight monitoring.

This walkthrough on sending email to Notion shows the underlying workflow pattern well.
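On the scraper side, the email route only needs a script that packages records into a readable message. The sketch below uses Python's standard `email` package; the function name, subject format, and SMTP details in the comment are placeholders, and the recipient would be whatever inbound address your Notion email workflow provides.

```python
from email.message import EmailMessage


def build_digest(records, sender, recipient, run_date):
    """Build a plain-text digest email from cleaned records."""
    message = EmailMessage()
    message["From"] = sender
    message["To"] = recipient
    message["Subject"] = f"Yelp competitor snapshot {run_date}: {len(records)} records"
    lines = [
        f"- {r.get('business_name') or 'Unknown'}: {r.get('rating_text') or 'no rating'}"
        for r in records
    ]
    message.set_content("\n".join(lines) or "No records in this run.")
    return message


# Hypothetical delivery over SMTP (host, port, and credentials are placeholders):
#   import smtplib
#   with smtplib.SMTP("smtp.example.com", 587) as smtp:
#       smtp.starttls()
#       smtp.login(user, password)
#       smtp.send_message(build_digest(records, sender, inbox_address, "2026-01-15"))
```

A one-line-per-business body keeps the digest readable in both an inbox and a Notion page.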

The best dashboard is the one your team updates and reads without asking engineering for help.

What the dashboard should actually show

Don’t dump raw records into Notion and call it a dashboard. Build views around decisions.

Useful views include:

- Competitor tracker sorted by category and neighborhood

- Review theme board for recurring praise and complaints

- Lead list filtered by business type and missing website or weak listing quality

- Refresh queue showing records that need a new scrape

This is where the project becomes useful to operators, consultants, and founders. The scraper gathers data. The Notion layer turns it into a review workflow.
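The refresh queue view can be fed by a simple age filter. This sketch assumes each record stores `scrape_date` as an ISO date string (YYYY-MM-DD) and uses a seven-day window; both are illustrative choices.

```python
from datetime import date, timedelta


def refresh_queue(records, today, max_age_days=7):
    """Return records whose last scrape is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        r for r in records
        if date.fromisoformat(r["scrape_date"]) < cutoff
    ]
```

Passing `today` in as an argument keeps the function deterministic, which helps when you want to replay a past run or test the cutoff logic.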

The Legal and Ethical Guide to Yelp Scraping

A Yelp scraper usually works fine right up until it becomes useful. Then someone asks for daily refreshes, broader coverage, and review text at scale. That is the point where legal and ethical decisions stop being theoretical and start shaping the design of the pipeline.

Yelp’s Terms of Service prohibit scraping. That alone should change how the project is scoped. Treat Yelp collection as a constrained internal research workflow with explicit limits, not as a general-purpose data acquisition system.

The practical question is not “can this be scraped?” It is “what is the smallest amount of scraping that solves the business problem without creating avoidable legal, operational, or reputational risk?”

A workable standard for internal use

Generally, the safest posture is narrow collection tied to a clear business purpose. If the goal is competitor monitoring, lead qualification, or review trend analysis for internal decisions, keep the scope aligned with that purpose and document it. If the goal is to republish Yelp content, build a public-facing review database, or clone Yelp’s listings and reviews into your own product, stop there. That is where the risk profile changes fast.

I recommend a few ground rules:

- Use the API where it covers the field you need

- Scrape only fields the API does not provide

- Collect the minimum page volume needed for the analysis

- Rate-limit requests conservatively

- Avoid storing unnecessary personal data from reviews or profiles

- Log what was collected, when, and why

- Set retention rules instead of keeping everything forever

Those controls help with more than legal exposure. They also produce a cleaner dataset for the dashboard work that follows. A Notion database filled with duplicated listings, stale reviews, and scraped text nobody is allowed to reuse is not useful business intelligence.

Ethics shows up in implementation details

A scraper can be technically successful and still be poorly run.

If the job hammers listing pages every hour, ignores robots guidance, rotates through residential IPs aggressively, and copies full review text into downstream tools, that signals carelessness. A restrained workflow looks different. It fetches only the pages needed, spaces requests out, stores structured summaries where possible, and gives the team a reason for every field in the Notion dashboard.

That distinction matters. In practice, the projects that hold up best are the ones built for a narrow internal use case with clear operational boundaries.

The advice I give junior engineers

Ask these questions before adding another endpoint or another thousand URLs to the queue:

- What decision will this field support?

- Can we get it from the API instead?

- Do we need the full text, or will a derived summary do?

- How often does this page really need to be refreshed?

- Would we be comfortable explaining this collection method to legal or to the client?

If the answers are weak, trim the scope.

That discipline is also what turns scraping into a real workflow instead of a one-off script. The end product here is not a CSV sitting in Downloads. It is a maintained pipeline that feeds a Notion dashboard the team can act on, with data collection choices that are deliberate, explainable, and easier to defend.

If you want the output of your Yelp scraping workflow to land somewhere your team can use, NotionSender is worth a look. It gives you a practical way to move email-based reports and structured updates into Notion, which is useful when you’re turning scraped listings, review summaries, or competitor snapshots into a living dashboard instead of another forgotten export.